You tell an AI agent you are vegetarian. It finds three restaurants and books a table at the one with the best reviews. The next week, a different agent plans your team dinner. It books a steakhouse. You correct it. The week after that, a travel agent plans your trip to Tokyo. It builds an itinerary around the Tsukiji fish market.

Each agent is brilliant in isolation. Each one starts with total amnesia.

This is the state of AI agents in 2026. Systems that can reason across thousands of documents, generate working code from a description, and analyze a quarterly earnings call in seconds -- cannot recall that you told them something last Tuesday. Every conversation is Groundhog Day. Every agent you speak to is a stranger you have already met.

The gap between a tool and a teammate is not intelligence. It is memory.

The amnesia is a design choice

The original architecture of large language models assumed a single conversation. A user sends a message, the model responds, the exchange either continues or ends. There is no tomorrow. The context window is the entire universe.

When engineers realized users wanted persistence, the first solution was the obvious one: append a flat list of facts to the system prompt. "The user prefers dark mode." "The user's name is Alice." ChatGPT's memory works this way. So does Gemini's. A growing list of key-value pairs, injected before every response, with no structure, no source attribution, and no way to know which application learned which fact.

This works for a single assistant. It does not work when agents multiply.

The moment you have more than one agent -- a research agent, an email agent, a scheduling agent, a finance agent -- the flat list becomes a liability. Every agent sees everything. The health agent's notes about your medication are visible to the marketing agent drafting your LinkedIn post. The food agent's knowledge of your allergies sits next to the code agent's memory of your preferred testing framework. There is no hierarchy, no boundary, no provenance.

The question is not whether AI agents should have memory. Of course they should. The question is: whose memory is it?

Three tiers of remembering

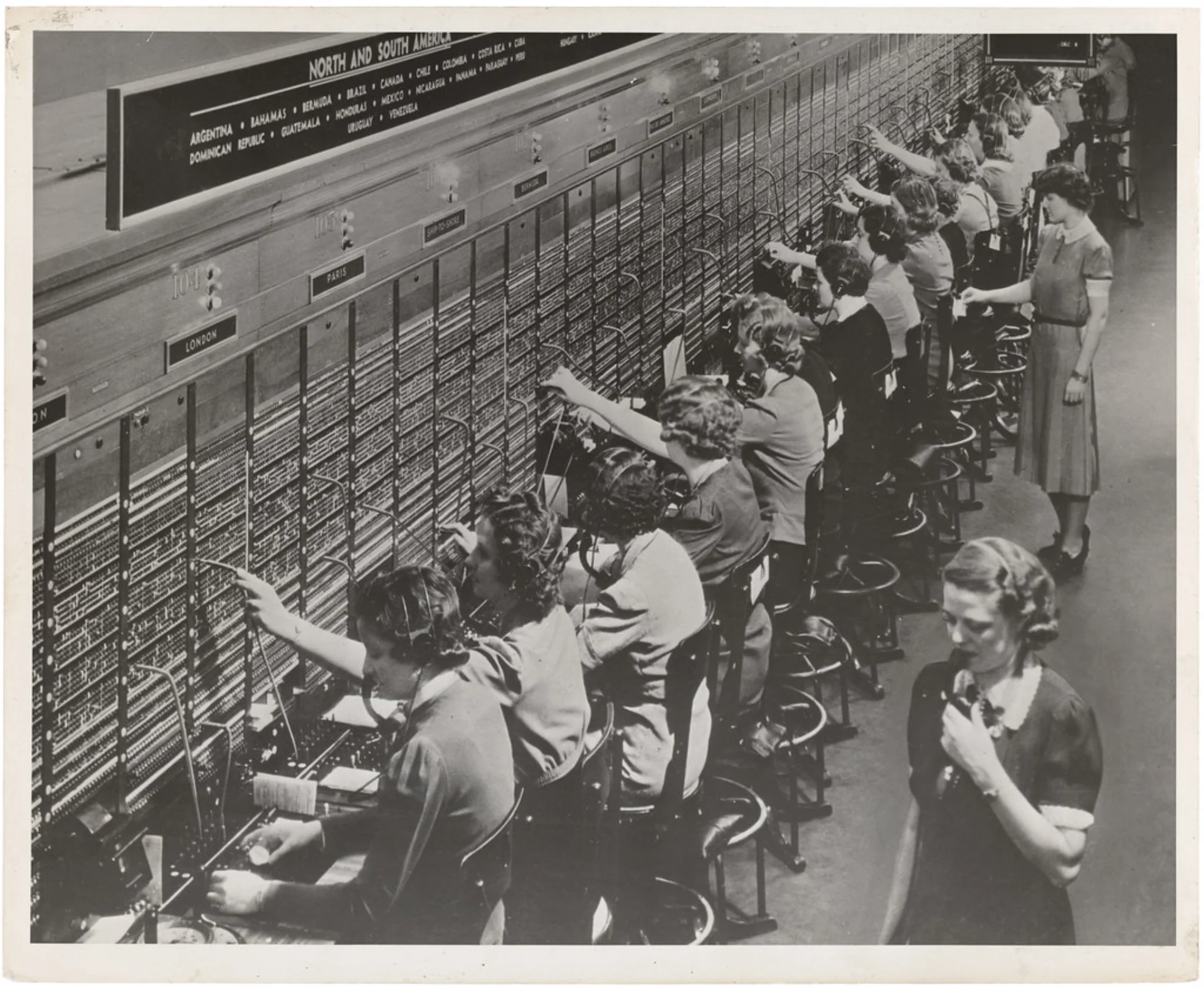

Every organization that has ever functioned solves the same memory problem with the same three-tier structure, independently, every time.

HR knows who you are. Your name, your dietary restrictions, your emergency contact. This information belongs to you, not to any department. When you transfer from engineering to product, the company does not forget your phone number. When a new manager joins, they do not ask you to re-enter your address.

Each department knows its own work. The engineering team knows the deployment schedule. The finance team knows the budget cycle. This knowledge is specific to the function, persists across individual conversations, and is not automatically shared with every other department.

Meetings are ephemeral. The conversation in a standup is useful in the moment. Some of it gets captured in notes. Most of it evaporates, and that is fine. Not everything needs to be remembered. The meeting is not the memory.

Rush implements this structure as a literal three-tier memory model.

Tier 1 · User memory

Global. Shared across all agents. Your name, preferences, dietary restrictions. Belongs to you, not to any agent.

~/.rush/user_memory.yaml

Tier 2 · Agent memory

Per-agent. Persistent across sessions. The research agent's topic history, the email agent's draft patterns, the finance agent's portfolio state.

~/.rush/agents/{slug}/memory.db

Tier 3 · Session memory

Per-conversation. The current exchange. Automatically compressed when it exceeds the context window. Most of it is meant to be forgotten.

~/.rush/sessions/{id}/messages.jsonl

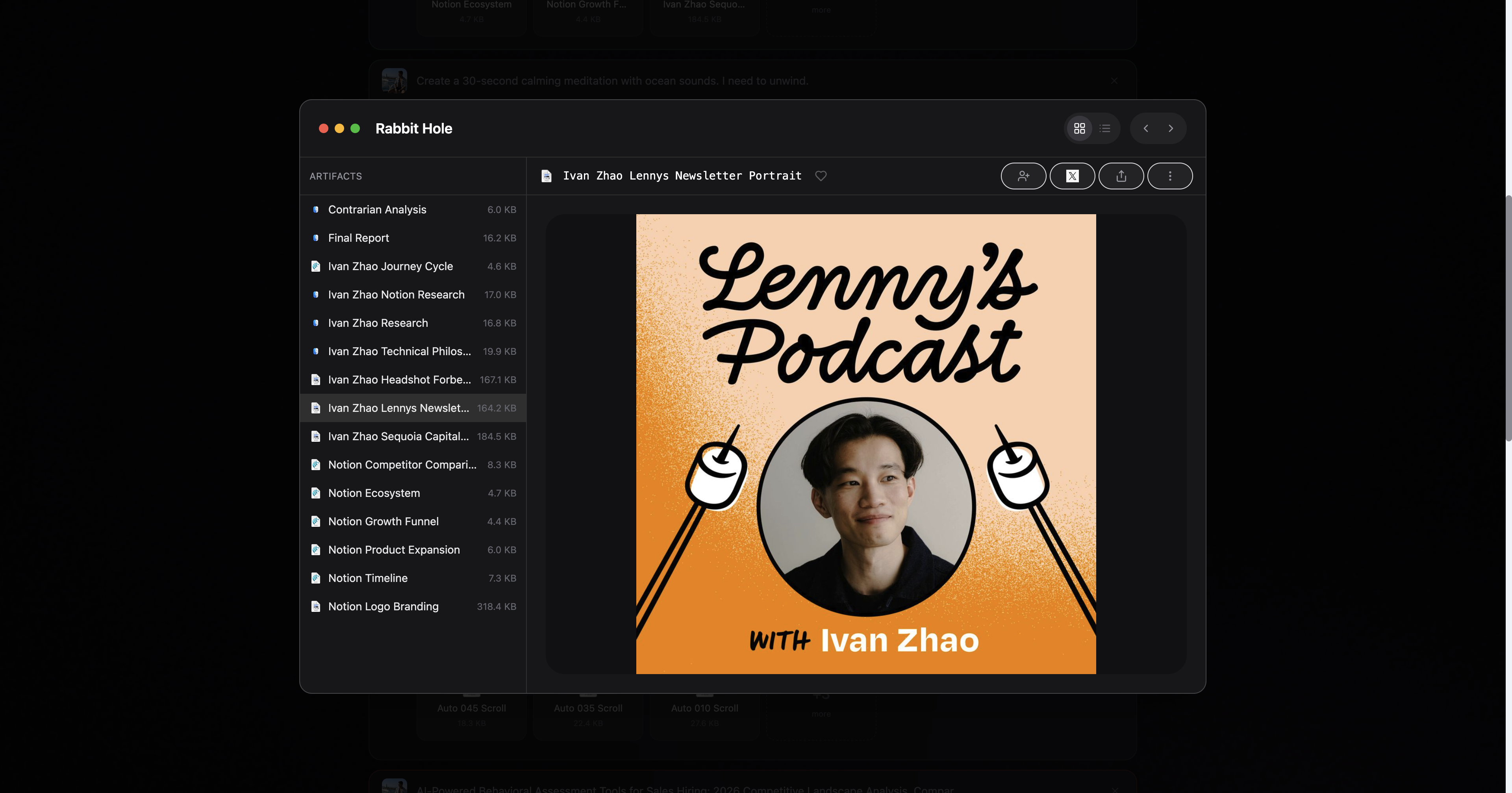

Rush makes the memory model legible in practice: agents produce durable artifacts, save them locally, and pick up from what already exists instead of starting over from zero.

Tier 1 flows down. When the food agent runs, it checks user memory first -- before asking you a single question. If a previous agent already learned you are vegetarian, the food agent knows. You said it once. Every agent benefits.

Tier 2 stays contained. The research agent's memory of which papers it has already analyzed does not leak into the email agent's context. Each agent accumulates expertise in its domain without polluting others.

Tier 3 is disposable by design. The session memory uses a hybrid context manager that automatically prunes, summarizes, and truncates as the conversation grows. Not everything deserves to be permanent. The system decides what to compress and what to keep, the way your own mind forgets the color of the restaurant walls but remembers what you ordered. That same distinction between fluent output and durable structure is why the gap between chat intelligence and shipped work keeps widening, as we argued in The Missing Piece of the Intelligence Revolution.

The three tiers are not a technical convenience. They are a claim about the structure of useful knowledge: some things are about you, some things are about the work, and some things are about the moment. If you want the broader frame for why that matters, this is also what turns software from an app into an agent operating system.

Provenance, or: who told you that?

Here is the part most memory systems get wrong. They store the fact but not the source.

A flat memory entry says: User is vegetarian. That is a fact with no history. You cannot ask when it was recorded, which agent recorded it, or whether it was something you stated explicitly or something an agent inferred from your restaurant choices. If it is wrong, you cannot trace the error. If you want to revoke it, you revoke everything or nothing.

Rush stores every memory with provenance metadata:

public:

dietary_preference:

_value: ["vegetarian"]

_source:

_agent: prix/daily-eats@1.0.0

_created: 2025-01-15T11:00:00Z

_updated: 2025-01-15T11:00:00Z

name:

_value: Alice

_source:

_agent: user

_created: 2025-01-15T10:30:00Z

_updated: 2025-01-15T10:30:00Z

Every leaf node in the memory tree carries three pieces of metadata: which agent wrote it, when it was first created, and when it was last updated. The _agent field uses a fully qualified identifier -- developer/slug@version -- so you know not just that "some food agent" learned your dietary preference, but that it was specifically version 1.0.0 of the Daily Eats agent, published by Prix.

The difference matters. When a value's source is user, it means you entered it directly. When the source is an agent identifier, it means the agent inferred or learned it during a session. You can delete everything a specific agent learned about you without touching anything you entered yourself. You can see the full audit trail of your own profile -- which agent contributed which fact, and when.

This is not a novel invention. It is a pattern every trusted institution already uses.

In medicine, a chart entry is not just "Patient has hypertension." It is "Dr. Patel noted hypertension on March 3, 2024, based on three consecutive readings above 140/90." The provenance is the trust. A diagnosis without a source is a rumor.

In software, git blame does not just show you the current state of a file. It shows you who wrote each line and when. The code is the fact. The blame annotation is the provenance. When a line is wrong, you know who to ask and what they were thinking.

In accounting, double-entry bookkeeping has required transaction sources since Luca Pacioli formalized the system in 1494. Every debit has a corresponding credit. Every entry has a date, a source document, and an author. Five centuries later, the principle is unchanged: a number without provenance is not accounting. It is guessing.

Memory without provenance is surveillance. Memory with provenance is trust.

The architectural consequence is that memory becomes a hierarchical tree rather than a flat list. User memory in Rush is a YAML tree where each node can have children, and every leaf carries its own source metadata. The path work.employer.name stores the company name; work.employer.website stores the URL. Each was potentially contributed by a different agent at a different time. The tree structure means related facts cluster naturally, and provenance travels with every individual fact, not with the collection.

The merge problem

Memory gets interesting -- and hard -- when it lives on more than one device.

You use Rush on your laptop at work. You use Rush on your phone during your commute. The research agent on your laptop learns that you are interested in transformer architectures. The email agent on your phone learns that you prefer concise replies. Both agents write to your user memory. When the two devices sync, whose version wins?

This is the distributed systems problem applied to personal knowledge. The classic approaches are last-write-wins (simple, loses data), operational transforms (complex, correct), and CRDTs (convergent, expensive). Rush takes a hybrid approach: timestamp-based three-way merge at the leaf level.

When the client pushes memory to the cloud, the server compares each leaf node's _updated timestamp against the existing server copy. If the client's timestamp is newer, the client wins. If the server's is newer, the server wins. Ties go to the client, because the user's most recent device is the most likely source of truth.

The merge happens at each individual leaf, not at the document level. If your laptop updated work.employer and your phone updated dietary_preference, both changes survive. Conflict only arises when both devices modified the exact same leaf in the same time window -- which, in practice, almost never happens, because different agents on different devices are working in different domains.

This matters because the alternative -- requiring a network connection for every memory write -- makes the system unusable on planes, in subways, and in any environment where connectivity is intermittent. Memory must be local-first and cloud-synced, not cloud-dependent. The phone should work on airplane mode. The sync should be invisible.

When memory goes wrong

Memory systems fail. The failures are instructive.

The agent remembers wrong. A food agent infers from your order history that you eat fish. You do not. You ordered fish once, for a guest. The agent recorded an inference as a fact. This is the most common failure mode, and provenance is the defense: because the entry shows which agent wrote it and when, you can trace the error to the specific session where the inference was made, correct it, and move on. Without provenance, you would not know which of your fifty memory entries is the lie.

The agent remembers too much. A research agent records every search query, every abandoned thread, every dead end. Your memory file grows to thousands of entries, most of which are noise. This is the session memory problem masquerading as a user memory problem. Session-scoped work should stay in Tier 3, where it is automatically compressed and eventually forgotten. The agent that promotes every ephemeral observation to permanent memory is the colleague who cc's the entire company on every email.

The agent shares what it should not. Your health agent knows about a medical condition. Your email agent, drafting a message to a colleague, references it. The boundary between agents was not enforced. This is why Rush separates user memory (global, visible to all agents) into public and private sections. The user decides what is shared. The architecture enforces the boundary. Private memory is excluded from other agents' context, the same way medical records are excluded from your credit report -- not by policy alone, but by the system's structure.

Each failure has the same root: the system stored something without enough metadata to govern it. The fact alone is not enough. You need the source, the scope, and the boundary. Provenance, tiers, and visibility controls are not features. They are the structural prerequisites for memory that does not become a liability.

The compounding effect

Something compounds when the memory architecture is right.

An agent that remembers correctly does not just avoid repeating mistakes. It starts each interaction further along. The research agent that knows you have already read the foundational papers on transformer attention does not waste your first ten minutes re-establishing context. It picks up where the knowledge frontier actually is. The email agent that knows your voice -- your preference for short sentences, your aversion to exclamation marks, your habit of ending with a question -- drafts messages that need one edit instead of five.

This is compound interest applied to interaction quality. Each session deposits a small amount of accurate, well-sourced knowledge. The next session starts from a higher baseline. Over weeks and months, the difference between an agent with memory and an agent without is not incremental. It is categorical.

A colleague you have worked with for a year does not ask you how you like your reports formatted. They know. That knowledge was not transferred in a single onboarding session. It accumulated through dozens of interactions, each one depositing a thin layer of understanding. The colleague who asks you the same formatting question every month is not unintelligent. They simply have no memory. You would stop working with them.

The same is true for agents. The ones that accumulate understanding become indispensable. The ones that start fresh every time remain tools you tolerate.

An agent becomes useful when continuity stops being hidden. The files, the artifacts, and the work history all stay on your machine, where you can inspect them.

The system beneath the system

Most discussions of AI memory focus on retrieval: how do you find the right memory at the right time? Vector databases, embedding models, semantic search. These matter.

The harder problem is upstream. What gets stored. Who stores it. What metadata accompanies the storage. Get retrieval wrong and the agent occasionally misses a relevant fact. Get the storage model wrong and the agent becomes a system you do not trust with your information.

The three-tier model is a claim about information architecture: some knowledge is universal (Tier 1), some is domain-specific (Tier 2), and some is ephemeral (Tier 3). Provenance is a claim about accountability: every fact must have an author. Visibility controls are a claim about boundaries: not every agent should see everything.

Together, they form the preconditions for a system where agents get better the more you use them, without becoming a privacy risk the more they know. That balance -- usefulness and trust scaling together -- is the entire design problem of agent memory. Most systems sacrifice one for the other. Flat memory lists maximize short-term usefulness by storing everything, everywhere, with no structure. Privacy-first systems that store nothing protect the user at the cost of eternal amnesia.

The answer is not a compromise between the two. It is a system where the structure of memory itself -- tiers, provenance, boundaries -- makes both possible simultaneously. The more the agents know, the more useful they become. The more metadata accompanies what they know, the more the user trusts them. These are not opposing forces. They are the same force, measured on two axes.

Every application you use daily has already solved this. Your email client remembers your contacts. Your browser remembers your passwords. Your maps application remembers your home address. Each one stores structured, scoped, user-controlled memory. AI agents are the most sophisticated software ever built, and they are the last to figure this out. The consumer version of that future is not "more chat." It is software that behaves more like a teammate, which is the promise behind Agents, for the Rest of Us.

The ones that do will not feel smarter. They will feel like they know you.

Rush's memory system is open and inspectable. User memory lives in ~/.rush/user_memory.yaml on your machine. You can read it, edit it, or delete it with a text editor. Agent memory lives in ~/.rush/agents/{slug}/memory.db. Session memory lives in ~/.rush/sessions/{id}/messages.jsonl. There is no hidden database, no opaque cloud store, no memory you cannot see. The system that remembers you is a system you can audit, and that is the point.