The cost of intelligence collapsed 280x in 18 months. Yet most people are still... chatting.

Update (April 2026): Since this was published, the gap described here has widened. Opus 4.6 and GPT-5.4 both ship with million-token contexts and autonomous desktop control. Five desktop agent products now compete at $20/month: Claude Cowork, ChatGPT Atlas, Manus, Genspark, Perplexity Computer. MCP became an industry standard under the Linux Foundation. The models got dramatically better. The interface question got more urgent, not less.

Something strange is happening in AI.

The models have gotten absurdly good. Claude Opus 4.5 scored 80.9% on SWE-bench, the first to clear 80% on real engineering tasks. Boris Cherny, who built Claude Code at Anthropic, recently shared that he landed 259 pull requests in 30 days. Every single line was written by Claude. He doesn't write code anymore. He guides it.

Meanwhile, over the holidays, developers discovered what Opus 4.5 could actually do. The reaction was visceral. "This is the first model that makes me actually fear for my job," wrote one engineer on Reddit, collecting nearly a thousand upvotes. They're calling it getting "Claude-pilled," that moment when you hand your work to the AI and realize, as one of them put it, you're watching "a thinking machine of shocking capability."

And yet.

Most people are still using AI like it's a search engine with attitude. A slightly smarter autocomplete. A chatbot you ask questions to, one at a time, waiting for each response before typing the next query.

There's a gap here. A massive one. And it's not about the models.

The Collapse No One Feels

Let's talk numbers.

According to the Stanford AI Index 2025, the cost of querying an AI model equivalent to GPT-3.5 dropped from $20 per million tokens in November 2022 to $0.07 by October 2024. That's a 280-fold reduction in eighteen months.

Epoch AI's research found that depending on the task, inference prices have fallen anywhere from 9x to 900x per year. And the trend is accelerating. Before January 2024, the median annual decline was 50x. After January 2024, it jumped to 200x.

To put this in perspective:

Annual cost reduction. Moore's Law doubles every 18 months. AI inference drops 200x per year.

We're watching decades of economic shift compressed into quarters.

This should feel like a revolution. For most people, it doesn't.

The Two Worlds

Here's what I see every day:

World A

An engineer opens ChatGPT. Types a question. Waits. Reads the response. Types a follow-up. Copy-pastes some code. It doesn't work. Back to ChatGPT to explain what went wrong. Twenty minutes later, they're debugging the conversation, not the code. This is how most people use AI.

World B

A different engineer spins up eleven agents (Gemini, Codex, Claude) in parallel. An orchestrator allocates twenty bugs across them simultaneously, routing work so dependencies resolve smoothly. Some agents tackle independent issues. Others coordinate on shared code. When conflicts arise, agents work them out together. If they can't, the orchestrator resolves it at the end and verifies everything works. The engineer doesn't write code. They orchestrate systems that write code.

Same underlying models. Same API prices. Radically different outcomes.

The gap between these two worlds isn't 10%. It's not even 10x. It's closer to 100x. And it's widening every month as the models improve and the tools... don't.

Why the Gap Exists

The problem is the interface.

Not just the buttons and text boxes, although that's part of it. The entire product experience. The input and output mechanisms through which human and machine collaborate.

Right now, that layer is a maze. To get from "I want a competitor analysis" to an actual competitor analysis, you need to choose a model, write a system prompt, connect a web browsing tool via MCP, pipe the results into a second model for synthesis, manage the context window so it doesn't truncate your sources, and format the output yourself. Six engineering decisions for one research task. Most people stop at step one and just ask ChatGPT.

But ChatGPT has two hundred million users. They type questions, get answers, and come back tomorrow. The interface isn't broken. It's the most successful product launch in the history of software. Why fix what works?

Because chat works for asking. It breaks down for working. The moment you need AI to research a topic across multiple sources, draft something from the findings, and send it to the right people, you're back to being the integration layer. One thread. One task. One thought at a time. Two hundred million people asking questions is not the same as two hundred million people directing work.

When intelligence costs $20 per million tokens, that's fine. You use it sparingly, for important questions.

When intelligence costs $0.07 per million tokens, essentially free, the constraint flips. The bottleneck is no longer "can the AI do this?" It's "how do I direct enough AI at enough problems simultaneously?"

Chat doesn't scale. You can't run twelve chat windows and context-switch between them productively. You can't spawn a research team in ChatGPT while a coding team works in parallel. The current product experience wasn't built for abundance. It was built for scarcity.

From OS to OS

Here's the shift that's coming: from Operating System to Orchestration System.

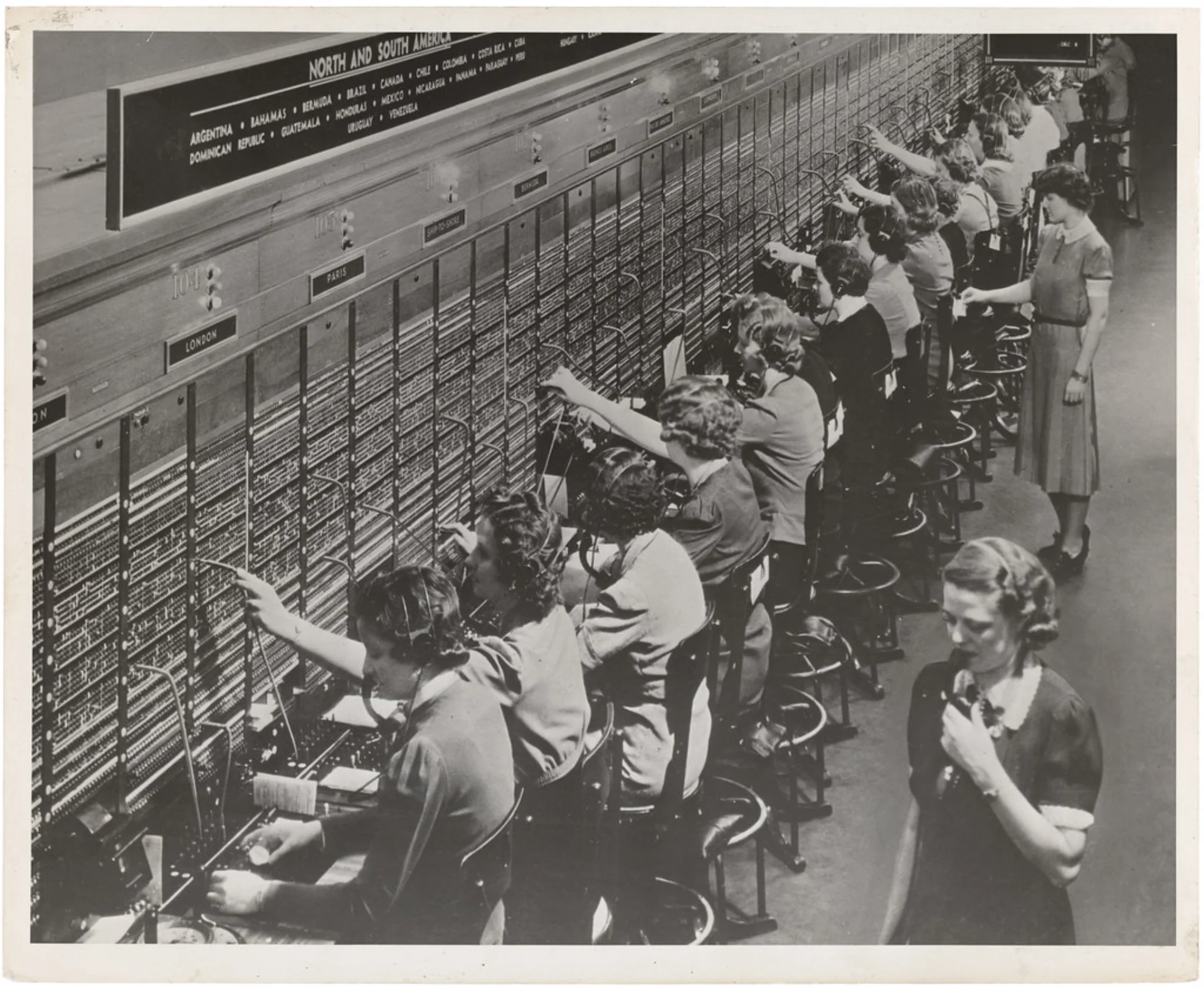

For decades, the operating system was about managing hardware resources like CPU cycles, memory, and disk access. The user was the source of intent, and the computer was the executor of instructions.

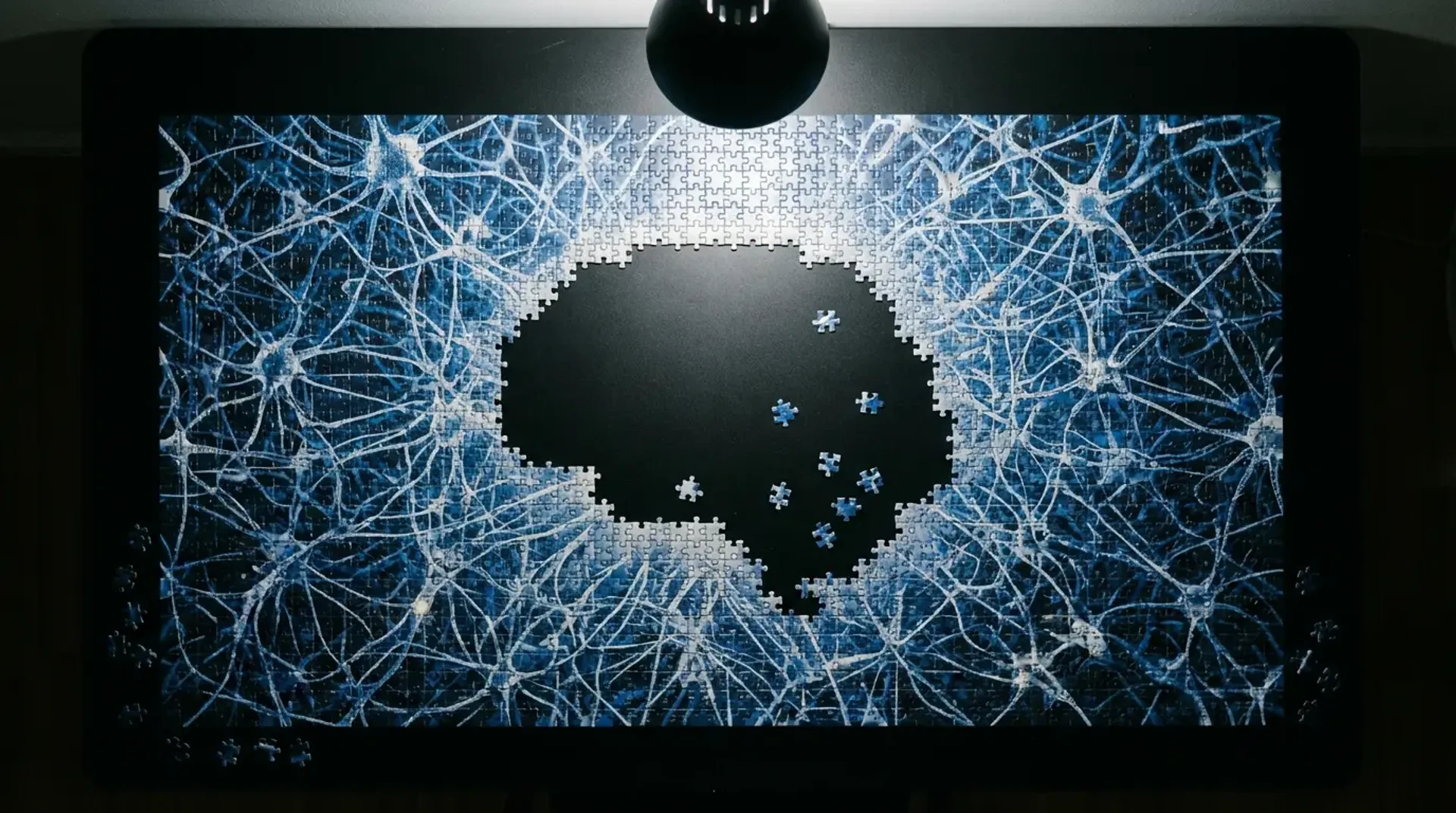

In the intelligence age, the operating system needs to manage cognitive resources. The user is still the source of intent, but now there are potentially dozens of agents that can reason, plan, and execute. The computer isn't just an executor anymore. It's a team.

This requires a fundamentally different interface. Not a chat window. Not a copilot that suggests the next line of code. An orchestration layer that lets you spin up agents, assign them tasks, coordinate their work, and synthesize their outputs. Rabbit Hole runs research in parallel while Inbox Ninja keeps communication clean.

(See The AX Paradigm for a deep dive on agent interfaces.)

Opus 4.6 and GPT-5.4 ship with million-token contexts. Inference costs almost nothing. MCP is an industry standard under the Linux Foundation. Everything is in place except the thing that makes it usable.

The Intelligence Gap

There's a term economists use: "technology diffusion." It describes how innovations spread through a population. First the pioneers, then the early adopters, then the mainstream. The gap between pioneers and mainstream can be years, sometimes decades.

With AI, the gap is forming in real-time. Some people are running agent swarms, extracting 100x the value from the same models everyone has access to. Most people are chatting, getting maybe 1% of what's possible.

This isn't about intelligence or technical skill. It's about tools. The pioneers built their own orchestration systems, or cobbled them together from developer frameworks. The mainstream is waiting for tools that don't require a PhD in prompt engineering to use.

The intelligence revolution has arrived. The economics prove it. The benchmarks prove it. The developers who "fear for their jobs" prove it.

The missing piece isn't smarter models. It's the interface that lets everyone participate.

Sources

- Stanford HAI: AI Index 2025

- Epoch AI: LLM Inference Price Trends

- Anthropic: Claude Opus 4.5

- Boris Cherny's Claude Code Workflow