Update (March 2026): Since this post was published, Apple has effectively confirmed the post's thesis. The Siri overhaul was delayed again past Spring 2026, with internal testers reporting features "not all working reliably." Craig Federighi admitted the hybrid architecture (merging old command-based Siri with a new LLM) didn't work, forcing a rebuild from scratch. Apple then signed a $1B/year deal with Google to license Gemini as Siri's foundation model. And as of this week, Bloomberg reports Apple plans to open Siri to rival AI assistants (ChatGPT, Gemini, Claude) in iOS 27. Apple is turning Siri into exactly what this post argues for: a coordination layer that routes to specialists, not a single agent that does everything.

Siri launched in 2011. Alexa in 2014. Google Assistant in 2016. The best AI engineers on earth, backed by the richest companies in history, working on the same problem for over a decade.

None of them can reliably set a reminder AND check your calendar AND draft an email in one interaction.

After 15 years, the most common use case is still setting timers and alarms.

This isn't a talent problem. It's an architectural one. And every major tech company is about to repeat the same mistake with LLMs unless they understand why.

The Generalist Ceiling

The assumption behind every single-agent assistant is the same: build one model smart enough to handle everything. Make it better every year. Eventually it'll be good enough.

The reality: general-purpose systems hit a ceiling where breadth kills depth.

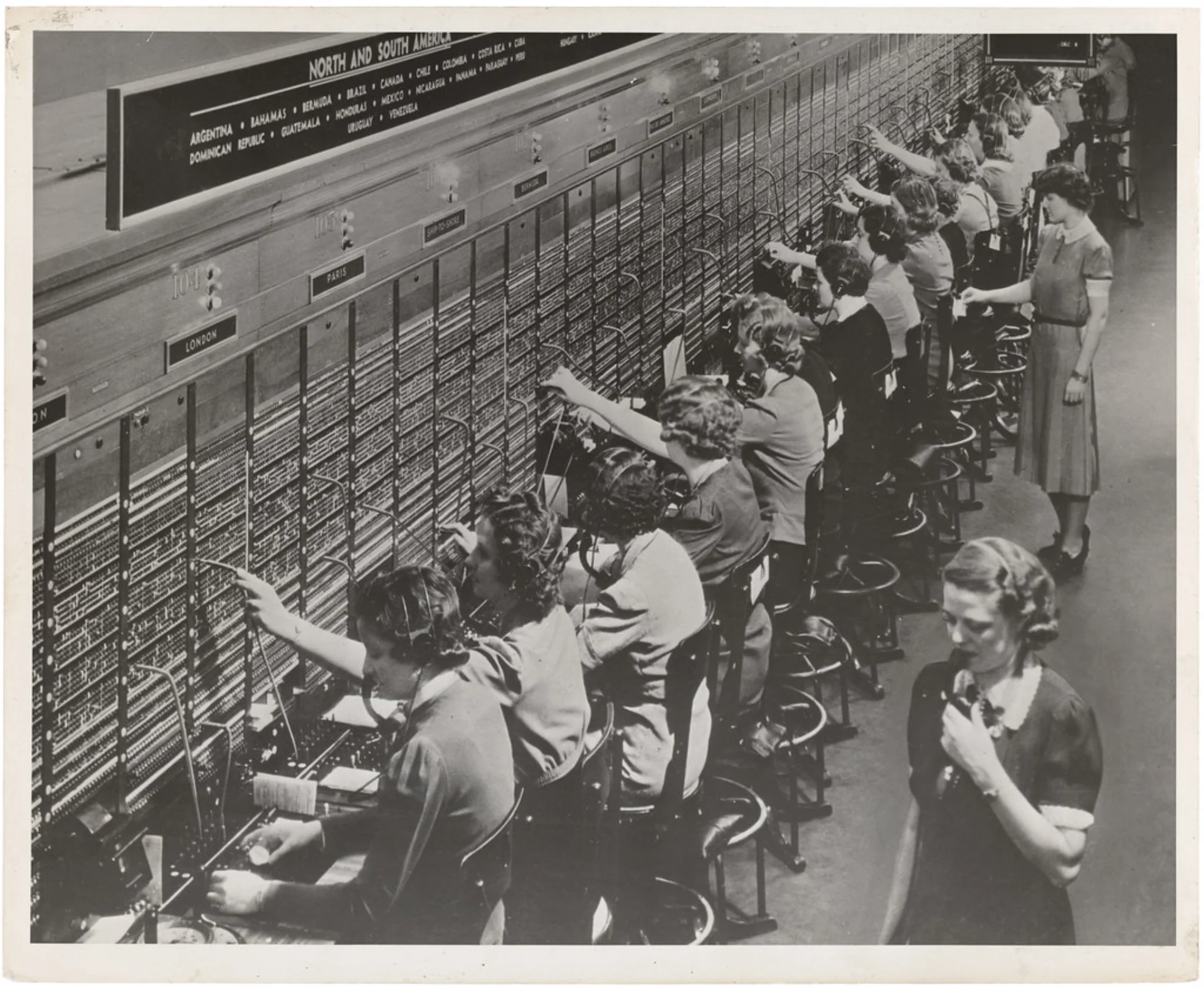

Medicine learned this a century ago. It didn't advance by making GPs smarter. It advanced by specializing, going from a profession that was 84% GPs in 1931 to one with 40 specialties and 89 subspecialties today. The GP still exists, but the job changed: the GP became the coordinator, routing patients to the right specialist.

Medicine advanced by specializing, not by making GPs smarter. AI is due for the same shift.

A single agent that tries to handle email, calendar, files, music, smart home, navigation, and messaging is the medical equivalent of one doctor practicing every specialty simultaneously. It's not a scaling problem. It's a category error.

Why "Just Make It Smarter" Doesn't Work

The architectural problem is fundamental. A single agent maintaining context across dozens of domains means the context window becomes a battlefield. Every domain competes for attention. Email context crowds out calendar context. Navigation instructions interfere with music preferences. Smart home state collides with messaging history.

The agent optimizes for the average case and excels at nothing. It becomes the median of its capabilities rather than the maximum. Adding more parameters, more training data, or more compute doesn't solve the structural problem. You're making a bigger generalist, not a better system.

Contrast this with how the best human organizations work. A CEO doesn't answer support tickets, write marketing copy, debug code, and negotiate contracts in the same afternoon. They have a team. The CEO's job is intent and coordination. The team's job is execution. The quality of the organization doesn't depend on how much the CEO personally knows about each domain. It depends on the quality of the specialists AND the quality of the coordination layer.

Single-agent assistants put the CEO on the support desk.

If you want the vocabulary for that coordination layer, start with what an Agent OS actually is: not a smarter chatbot, but the system that gives specialists shared memory, tools, and permissions.

The Orchestration Alternative

Here's how the alternative works. You say: "Prepare for my meeting with Acme tomorrow."

Single-agent approach

The assistant tries to search your calendar, find relevant emails, locate related documents, and summarize your notes, all in one pass. It finds the meeting. It pulls up two of the seven relevant emails. It misses the shared document entirely because the context window is already saturated with calendar data. It produces a passable but incomplete summary. You spend 15 minutes filling in the gaps yourself.

Orchestrated approach

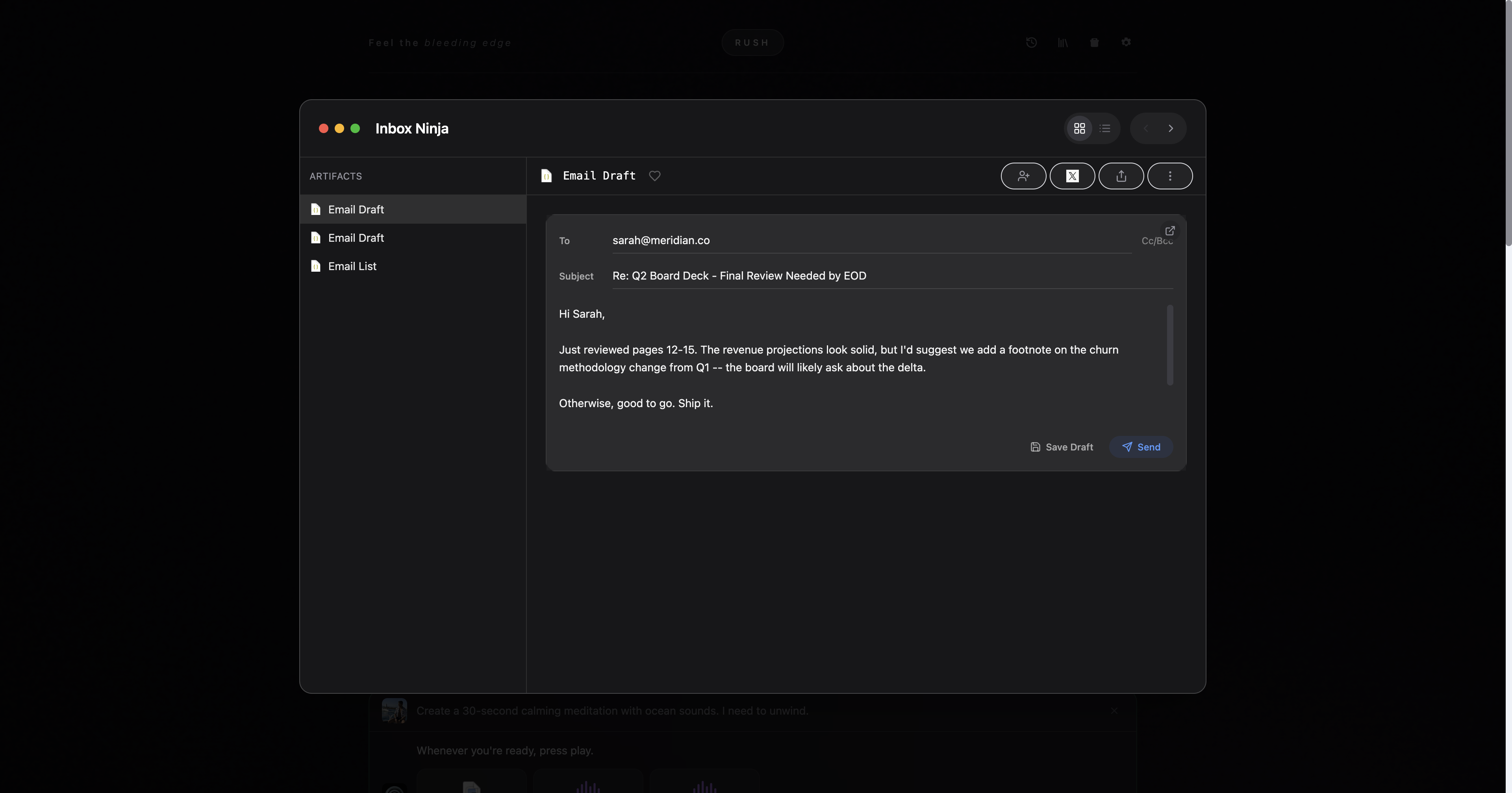

A coordinator identifies the task and dispatches three specialists. A calendar agent finds the meeting, pulls attendee details, checks the agenda, and flags that the time was moved twice. An email agent surfaces the entire thread that led to this meeting, including the attachment Sarah sent last Tuesday with revised pricing. A research agent pulls Acme's recent press releases. They just closed a funding round, which changes your negotiation position.

With the single agent, you spent more time fixing the preparation than preparing yourself.

With the orchestrated system, you didn't prepare at all. You reviewed a briefing.

Each specialist goes deep in its domain. The coordinator synthesizes results into a single briefing.

The output isn't incrementally better. It's categorically different. The single agent gives you a reminder. The orchestrated system gives you preparation.

That shift from one generalist to a team of specialists is the same pattern behind multi-agent systems: separate agents go deep, then hand structured output back to a coordinator.
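The dispatch-and-synthesize pattern described above can be sketched in a few lines. This is a minimal illustration, not how any shipping assistant works: the three agent functions, the `Finding` type, and `coordinate` are hypothetical names, and each specialist is a stub that returns placeholder text where a real agent would call its own model and tools.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Finding:
    """Structured output a specialist hands back to the coordinator."""
    agent: str
    summary: str


# Hypothetical specialists. Each stub stands in for an agent with its own
# context, tools, and permissions; the return values are placeholders.
async def calendar_agent(task: str) -> Finding:
    return Finding("calendar", "Meeting found; the time was moved twice.")


async def email_agent(task: str) -> Finding:
    return Finding("email", "Full thread surfaced, including the revised pricing attachment.")


async def research_agent(task: str) -> Finding:
    return Finding("research", "Acme recently closed a funding round.")


SPECIALISTS = [calendar_agent, email_agent, research_agent]


async def coordinate(task: str) -> str:
    """Dispatch the task to every specialist concurrently, then synthesize."""
    # gather() runs the specialists concurrently and returns their results
    # in the same order as SPECIALISTS.
    findings = await asyncio.gather(*(agent(task) for agent in SPECIALISTS))
    lines = [f"Briefing for: {task}"]
    lines += [f"- [{f.agent}] {f.summary}" for f in findings]
    return "\n".join(lines)


if __name__ == "__main__":
    print(asyncio.run(coordinate("Prepare for my meeting with Acme tomorrow.")))
```

The design point is that no specialist sees another's context. The coordinator only receives short, structured findings, so its own context stays small no matter how deep each specialist goes.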

Why This Is Happening Now

If orchestration is so obviously better, why hasn't it already won? Because until recently, it wasn't feasible. Three things converged in 2025-2026 that changed the equation.

Models got cheap enough. Running five agents in 2023 cost $0.50 per task. By late 2025, costs had dropped by as much as 900x. Five agents now cost less than one interaction did two years ago.
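A quick back-of-the-envelope check of that comparison, using only the figures in the paragraph above. Two things here are assumptions rather than claims from the post: the 2023 cost is split evenly across the five agents, and the full 900x drop (the best case) is applied.

```python
# Figures from the paragraph above. The even per-agent split and the use of
# the best-case 900x drop are assumptions made for illustration.
COST_FIVE_AGENTS_2023 = 0.50  # dollars per task, five agents
MAX_PRICE_DROP = 900          # "as much as 900x"
AGENTS = 5

cost_one_agent_2023 = COST_FIVE_AGENTS_2023 / AGENTS
cost_five_agents_now = COST_FIVE_AGENTS_2023 / MAX_PRICE_DROP

print(f"One agent, 2023:  ${cost_one_agent_2023:.4f}")
print(f"Five agents, now: ${cost_five_agents_now:.4f}")
```

Under the same even-split assumption, the comparison holds for any price drop larger than 5x, so it does not depend on the best-case figure.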

Protocols emerged. Anthropic open-sourced MCP. Google launched A2A. Standard protocols turned agent orchestration from a research project into an engineering problem.

Hardware got fast enough. Apple's M-series chips can run multiple agents locally. Lower latency. Better privacy. No per-request API costs for the coordination layer.

None of these conditions existed when Siri launched. The single-agent architecture wasn't a bad choice in 2011. It was the only viable choice. But it's a choice now. And it's the wrong one.

The Coordination Layer Is the Product

When this post was first published, Apple's revamped Siri (delayed to Spring 2026) looked like a better single agent. Smarter model. More integrations. Deeper system access. Still one agent trying to do everything. A better GP, not a hospital.

Then reality intervened. The hybrid architecture failed. The launch slipped again. Apple signed a $1B/year deal to license Google's Gemini as Siri's foundation. And now they're opening Siri to third-party AI, routing queries to ChatGPT, Gemini, and Claude. The company that spent 15 years building the definitive single agent is pivoting to orchestration.

The next decade of AI assistants won't be defined by which company has the smartest model. It'll be defined by who builds the best orchestration layer, the coordination infrastructure that lets specialized agents work together on tasks no single agent can handle well. Apple just bet its assistant strategy on exactly this.

The parallel to computing history is exact. The value of an operating system was never in any individual program. It was in the ability to run many programs, manage their interactions, and present a unified experience to the user. The OS was the coordination layer for software. An Agent OS is the coordination layer for intelligence. If you want the memory side of that argument, "Your Agent Doesn't Know Your Name" follows the same problem from a different angle.

The era of the single genius assistant is ending. The era of coordinated specialist teams is beginning.

Prediction: By 2028, every successful AI product will run multiple coordinated agents behind the scenes. The ones that don't will cap out at "set a timer."

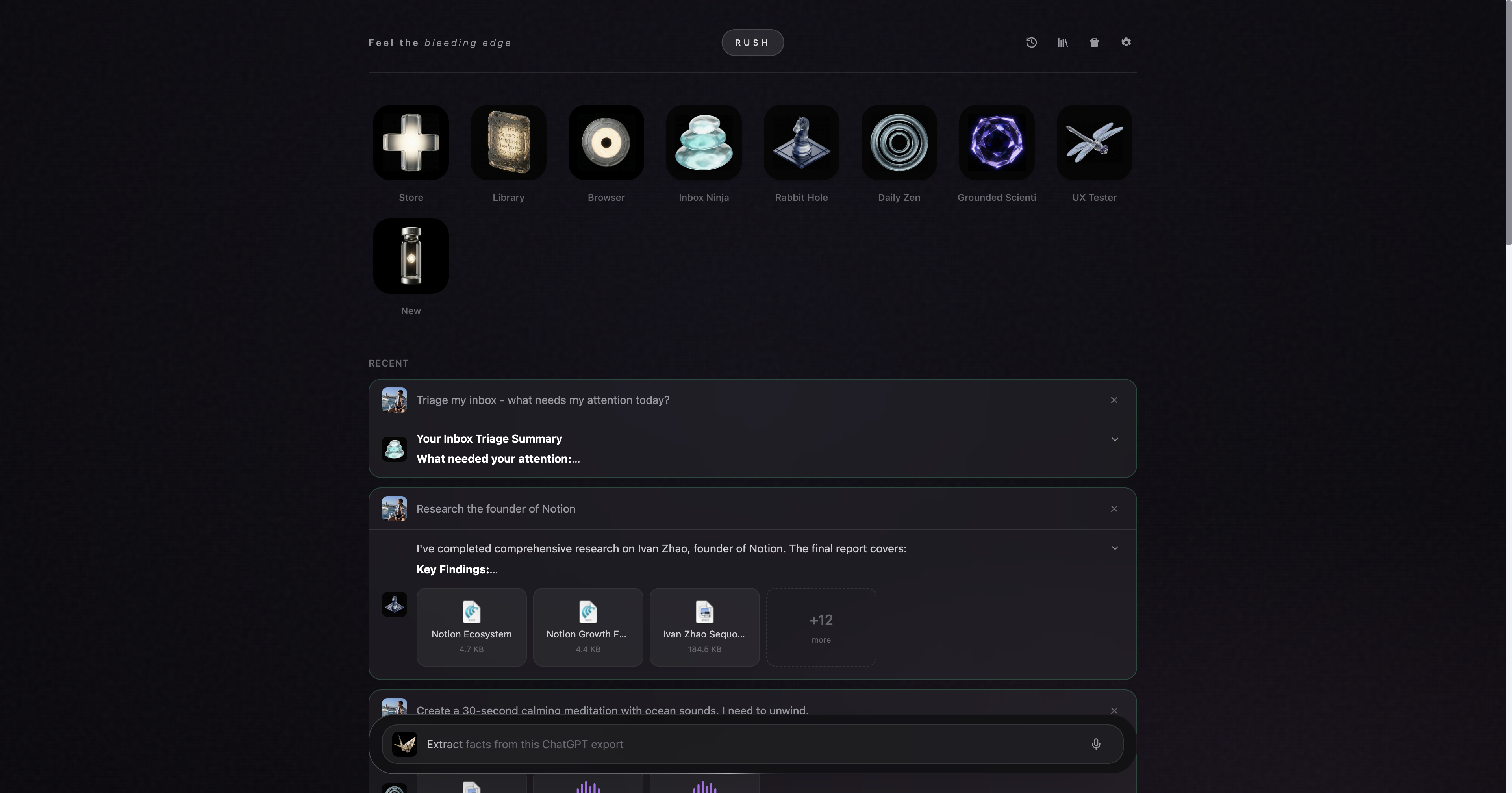

Rush is the coordination layer for personal agent teams. Learn more or browse the agents.

The trap is thinking you need a smarter Siri. You don't. You need the stack.